OpenAI WebSockets

OpenAI brings WebSockets to the Realtime API: persistent, event-driven streaming for faster AI agents with text, audio, and tool calls.

OpenAI WebSockets: why AI agents can suddenly run much faster and "in real time"

AI agents only become truly useful when they respond the way people do: immediately, fluidly, and without waiting for the next request. With WebSockets in the Realtime API, OpenAI takes a significant step in that direction.

What has been launched?

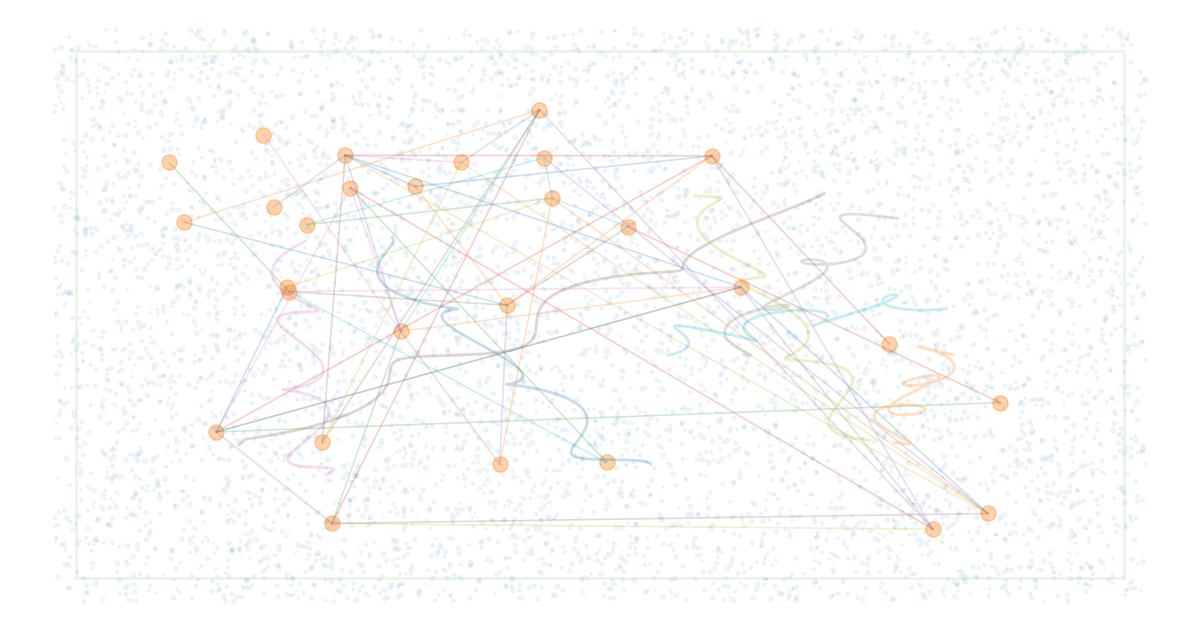

OpenAI has added support for WebSockets (and other transports like WebRTC) in the Realtime API, allowing you to establish a persistent, bidirectional connection with a real-time model. Instead of making a new HTTP call each time, the connection remains open, and the client and model continuously exchange events.
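
As a rough illustration of what "one persistent connection" means in practice, the sketch below only builds the URL and headers that a WebSocket client would use to open a single long-lived session. The endpoint, the `model` query parameter, and the bearer-token header reflect the publicly documented pattern as best understood here, and the helper name `realtime_connection_params` is hypothetical; verify all of them against the current Realtime API documentation before relying on them.

```python
from urllib.parse import urlencode

# Assumed endpoint; confirm against the current Realtime API docs.
REALTIME_BASE_URL = "wss://api.openai.com/v1/realtime"


def realtime_connection_params(model: str, api_key: str) -> tuple[str, dict[str, str]]:
    """Return the WebSocket URL and headers for one long-lived session.

    Hypothetical helper: it performs no network I/O. The returned values
    are meant to be handed to whichever WebSocket client library you use.
    """
    url = f"{REALTIME_BASE_URL}?{urlencode({'model': model})}"
    headers = {"Authorization": f"Bearer {api_key}"}
    return url, headers
```

You would call this once per session, for example `realtime_connection_params("gpt-realtime", api_key)` (the model name is an assumption), open the socket, and then reuse that socket for every subsequent event instead of issuing new HTTP calls.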

Why WebSockets make such a difference for AI agents

Most "classic" AI integrations work through the request/response pattern:

- Your app sends a request (prompt + context).

- You wait for a response.

- For the next step, you repeat this process.

This works well for chat, but agents often have a different dynamic: they think, plan, call tools, ask follow-up questions, and stream output in the meantime. With WebSockets, you can make that agent interaction much more natural:

- Lower latency due to less connection overhead.

- Streaming of tokens (and, in the Realtime API, audio) while the model is still generating.

- Event-driven orchestration (client events and server events) instead of "polling".

- Long-running sessions in which state and configuration are managed in one place, on the session, rather than resent with every request.

Realtime = more than "faster chatting"

The Realtime API is explicitly designed for multimodal, low-latency interactions. Think of applications where a fluid conversation really matters:

- Voice assistants that take in and produce speech without noticeable pauses.

- Live co-pilots (support, sales, coaching) that perform actions while talking.

- Realtime dashboards where the agent continuously interprets updates and responds.

How does it work technically (high-level)?

In the Realtime API, you work with a continuous stream of events:

- Client events: for example, session configuration, text input, audio chunks, or tool/function definitions and results.

- Server events: the model sends back tokens, (audio) output, status updates, and completion signals.

This event model is important: it makes agents feel "alive" and interactive, because you don't have to wait for a complete response before taking the next step.
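
The client/server event split described above can be sketched without any network code: client events are JSON messages you serialize and send, and server events are JSON messages you route by their `type` field. The event names used here (`session.update`, `conversation.item.create`, `response.output_text.delta`) and the payload shapes are assumptions based on the published event reference and have changed between API versions, so treat them as placeholders. `ServerEventRouter` is a hypothetical helper, not part of any SDK.

```python
import json
from typing import Any, Callable

Event = dict[str, Any]


def session_update(instructions: str) -> str:
    """Client event: configure the session (payload shape is illustrative)."""
    return json.dumps({"type": "session.update", "session": {"instructions": instructions}})


def user_text(text: str) -> str:
    """Client event: add a user text message to the conversation."""
    return json.dumps({
        "type": "conversation.item.create",
        "item": {
            "type": "message",
            "role": "user",
            "content": [{"type": "input_text", "text": text}],
        },
    })


class ServerEventRouter:
    """Route each incoming server event to a handler registered for its type."""

    def __init__(self) -> None:
        self._handlers: dict[str, Callable[[Event], None]] = {}

    def on(self, event_type: str, handler: Callable[[Event], None]) -> None:
        self._handlers[event_type] = handler

    def dispatch(self, raw: str) -> bool:
        """Parse one message; return True if a handler was found and called."""
        event = json.loads(raw)
        handler = self._handlers.get(event.get("type", ""))
        if handler is None:
            return False
        handler(event)
        return True
```

In a real client, every message received on the socket would be passed to `dispatch`, so a handler registered for text deltas can update the UI as soon as each chunk arrives rather than after the full response.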

WebSockets vs WebRTC: when to choose what?

In practice, you often see this pattern:

- WebRTC: ideal for client-side real-time audio (browser/mobile) where ultra-low latency is crucial.

- WebSockets: very strong for server-to-server, middle-tier services, and general real-time event streams (text, audio, tool events) with a simple and stable integration.

What does this concretely mean for your agent architecture?

With WebSockets, you can structure your agent more effectively:

- Session-first: you start a session, configure settings and tools, and keep it live.

- Continuous streaming: output (text/audio) comes in while the model is still generating.

- Faster tool loops: tool calls and their results travel back to the model over the same open connection.

- Better UX: fewer "thinking pauses", more fluid conversation.
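
The "faster tool loop" point can be sketched as a pure function: given a completed function call from the model, run the matching local tool and produce the two client events that return the result and ask the model to continue. The input here is a simplified dict with `name`, `call_id`, and `arguments` that you would extract from whichever server event carries the finished call. The outgoing event names (`conversation.item.create` with a `function_call_output` item, followed by `response.create`) are assumptions to check against the event reference. `get_weather` and `TOOLS` are hypothetical.

```python
import json
from typing import Any, Callable


def get_weather(city: str) -> dict[str, str]:
    """Hypothetical local tool; a real one would call a weather service."""
    return {"city": city, "forecast": "sunny"}


# Hypothetical registry mapping tool names to local callables.
TOOLS: dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def handle_tool_call(call: dict[str, Any]) -> list[str]:
    """Run one tool call and return the client events to send back, in order."""
    tool = TOOLS.get(call["name"])
    if tool is None:
        result: Any = {"error": f"unknown tool: {call['name']}"}
    else:
        try:
            # Arguments arrive as a JSON-encoded string.
            result = tool(**json.loads(call["arguments"] or "{}"))
        except Exception as exc:  # report failures to the model instead of crashing
            result = {"error": str(exc)}
    return [
        json.dumps({
            "type": "conversation.item.create",
            "item": {
                "type": "function_call_output",
                "call_id": call["call_id"],
                "output": json.dumps(result),
            },
        }),
        # Ask the model to continue now that the tool result is available.
        json.dumps({"type": "response.create"}),
    ]
```

Because both messages go out on the socket that delivered the call, there is no new request to set up between the tool finishing and the model resuming.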

What should you pay attention to?

- State management: for long-running sessions, you want to explicitly determine what to retain, reset, or summarize.

- Backpressure & retries: streaming requires robust handling of network fluctuations, such as buffering for slow consumers and reconnecting after dropped connections.

- Observability: log events (without sensitive content) so you can debug latencies and agent loops.
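
Two of the points above, retries and observability, lend themselves to small helpers. The sketch below shows one common approach for each: exponential backoff with full jitter for reconnect delays, and a recursive redaction pass that keeps event structure (types, IDs) for logging while masking content fields. The names `backoff_delays`, `redact`, and `SENSITIVE_KEYS` are hypothetical, and the set of keys treated as sensitive is an assumption you would adapt to the events you actually receive. Backpressure is not covered here.

```python
import random
from typing import Any, Optional

# Assumed set of fields that may carry user or model content.
SENSITIVE_KEYS = {"delta", "audio", "text", "transcript", "arguments", "output", "instructions"}


def backoff_delays(
    base: float = 0.5,
    cap: float = 30.0,
    attempts: int = 6,
    rng: Optional[random.Random] = None,
) -> list[float]:
    """Full-jitter backoff: delay n is uniform in [0, min(cap, base * 2**n)]."""
    rng = rng or random.Random()
    return [rng.uniform(0.0, min(cap, base * 2 ** n)) for n in range(attempts)]


def redact(value: Any) -> Any:
    """Return a copy of an event that is safe to log: structure kept, content masked."""
    if isinstance(value, dict):
        return {
            key: "<redacted>" if key in SENSITIVE_KEYS else redact(inner)
            for key, inner in value.items()
        }
    if isinstance(value, list):
        return [redact(inner) for inner in value]
    return value
```

A reconnect loop would sleep for each delay in turn before retrying the connection, and a logger would record `redact(event)` together with a timestamp so that latencies and agent loops can be traced without storing what was said.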

Conclusion

With WebSockets in the Realtime API, AI agents gain an infrastructure that fits how agents really work: continuous, event-driven, and streaming. The result is not only faster, but above all more human in interaction and more powerful in workflow automation.

Sources

- OpenAI API Docs – Realtime API (overview)

- OpenAI API Docs – Realtime API via WebSocket

- OpenAI – Introducing the Realtime API

- OpenAI Agents SDK Docs – Realtime WebSocket transport